"tight wifi" sounds like marketing BS. Any chance of a link to the company so I can read up on it?

Long tech rambling follows.

Energy propagation is ruled by the inverse square law. This states that the amount of energy delivered over distance is inversely proportional to the square of the distance. This is an absolute fact that is easily proven, and it's true for light, radio, gamma rays, lasers, masers, sound, and every other form of energy that radiates outward from a source.

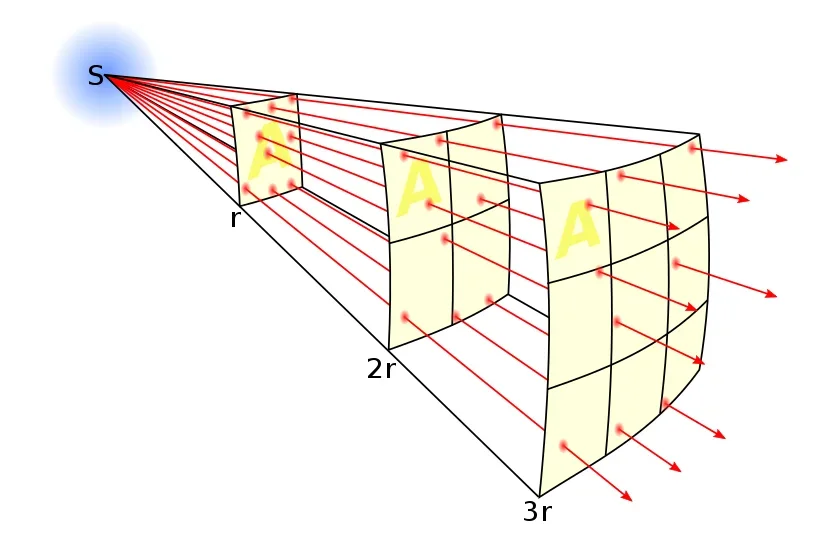

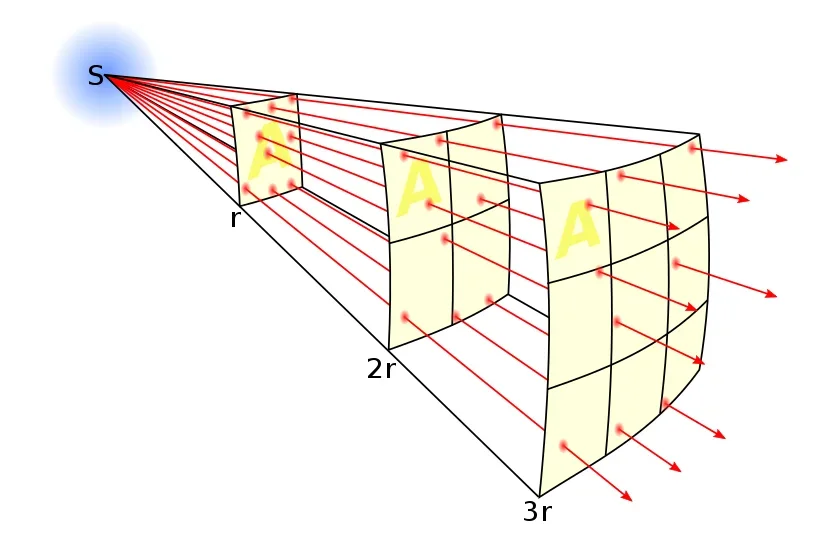

For anyone who doesn't like math, here is a useful pic from Wikipedia:

In this drawing, if we take r to be the reference distance (let's say one foot), then at 2r (two feet) the same area measured at r (one square foot) receives only 1/4th of the energy. 1/4th is the inverse square of 2 (2 squared is 4, so the inverse is 1/4th). At three times the distance (3r = three feet), the same area (1 sq ft) receives 1/9th of the energy.
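For anyone who would rather see it as code, here is a minimal sketch of the same arithmetic. The function name `relative_intensity` is just something I made up for illustration, and it assumes an ideal source radiating evenly in all directions with nothing in the way.

```python
def relative_intensity(distance_multiple: float) -> float:
    """Fraction of the reference energy that lands on the same area
    at `distance_multiple` times the reference distance r."""
    if distance_multiple <= 0:
        raise ValueError("distance_multiple must be positive")
    # Inverse square law: energy per unit area falls off as 1 / distance^2.
    return 1.0 / distance_multiple ** 2


# Same one-square-foot patch, moved farther and farther from the source.
for multiple in (1, 2, 3, 10):
    fraction = relative_intensity(multiple)
    print(f"{multiple}r: 1/{multiple ** 2} of the energy ({fraction:.4f})")
```

Going from r to 10r already leaves you with 1/100th of what you started with, which is the whole problem in a nutshell.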

This inverse square law means it's *very* difficult to send signals over long distances - and that's not considering things that interfere with signals like buildings, mountains, etc. You know how a flashlight becomes useless over distance? Inverse square law. You know how modern LED flashlights work better over distance? They output more power.

Different frequencies propagate differently. Lower frequencies like those used in AM radio (medium wave), and the somewhat shorter waves used in shortwave radio, naturally propagate for long distances and can even be bounced off of the ionosphere.

Higher frequencies don't propagate as well. Things like VHF and UHF (like traditional TV signals) are pretty much line of sight, which is why TV stations were local to the cities from which they were transmitted.

At the far end of the spectrum, X-rays and gamma rays are very energetic and can pass through things. Cosmic rays (which are actually high-energy particles rather than electromagnetic waves) are more energetic still, and the muons they produce have been measured in deep caverns underground.

The traditional way to overcome the signal weakening over distance due to the inverse square law is with power. This is why AM radio stations used to advertise "50,000 watts of power!", because increased power at the base meant that their listening area was wider and so could reach more people.
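To put rough numbers on the power-versus-range tradeoff, here is a small sketch. It assumes idealized free-space spreading only, so it ignores terrain, ground wave, the ionosphere, and antenna design, all of which matter a lot for real AM stations. The function names are made up for illustration.

```python
import math


def power_multiplier_for_range(range_multiple: float) -> float:
    """How many times more power is needed to deliver the same signal
    strength at `range_multiple` times the distance (free space only)."""
    return range_multiple ** 2


def range_multiplier_for_power(power_multiple: float) -> float:
    """How many times farther the same signal strength reaches when the
    transmitter power is multiplied by `power_multiple` (free space only)."""
    return math.sqrt(power_multiple)


# Doubling the reach costs four times the power.
print(power_multiplier_for_range(2))

# Going from 5,000 W to 50,000 W is 10x the power,
# but only buys a bit over 3x the distance.
print(round(range_multiplier_for_power(50_000 / 5_000), 2))
```

That square root is why brute-force power gets expensive fast: every extra bit of range costs disproportionately more watts.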

Remember CB radios? CB radios are actually 11-meter shortwave radios, but they had limited range because the FCC limited them to 5 watts (later lowered to 4W) in an effort to keep them from interfering with other services. A typical ham radio transceiver operating on HF (high frequency, which is actually "short wave", which is a whole different topic) usually puts out 100W, and with a 100W transceiver I can make contacts all over the country (and sometimes the world) depending on atmospheric conditions and antenna design. As an Extra class licensee I can run up to 1500W. With a 1500W VHF rig and a well-tuned and aimed antenna (or even better, an antenna array) I can bounce signals off the moon and hear the very weak reflections seconds later.

Back to wifi: the wifi in your house is limited to 4W on 2.4 GHz and 1W at 5 GHz (it's a bit more complicated than that, but those wattages are correct).

Cell phones are limited to 3W, but they almost never use that much. Have you ever noticed that when you don't have a signal your battery dies faster? That is because when your phone can't find a good signal it turns its transmit power up and keeps searching for a tower, and that takes a lot of power. Old cell phones were analog, but all modern cell phones for probably the last 15-20 years or so are digital. Digital signals require substantially lower power levels than analog. What's more, cell phones are so smart and the processing on the towers is so smart that your phone automatically adjusts to use the lowest power it needs at all times.

Finally, "radiation" from cell phones (non-ionizing electromagnetic) is completely different from radiation from uranium (ionizing) and a lot of people don't understand that. The radiator in a house emits radiation but no one complains about that!

X-rays and gamma rays (both electromagnetic) are dangerous (and useful) because they are ionizing and pass through the body. Microwaves don't; they get absorbed, which is why they heat food in ovens with the same name. The danger from microwaves is the same as the risk of standing near a powerful ham radio antenna: if you're too close and/or the power is too high you will get burned or even cooked. Microwave ovens are small because of the inverse square law, and most microwave ovens are 800-1500 watts! So if it takes 1500 watts to cook something less than a foot away, and you put a microwave on top of a cell phone tower, rigged it to run while open, and watched it from a mile away, would it hurt you? The answer is no, because of the inverse square law.
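Here is a back-of-the-envelope sketch of the microwave-on-a-tower thought experiment. It assumes the full 1500 watts radiates evenly in every direction through empty space, which a real oven would not do, so treat it as a rough illustration rather than a safety calculation. The helper name `power_density_w_per_m2` is made up for this example.

```python
import math

FEET_PER_MILE = 5280
METERS_PER_FOOT = 0.3048


def power_density_w_per_m2(power_watts: float, distance_m: float) -> float:
    """Power per square meter at `distance_m` from an idealized source
    that spreads `power_watts` evenly over a sphere (area 4 * pi * r^2)."""
    return power_watts / (4 * math.pi * distance_m ** 2)


# Assumed numbers from the thought experiment: 1500 W, one foot vs one mile.
near = power_density_w_per_m2(1500, 1 * METERS_PER_FOOT)
far = power_density_w_per_m2(1500, FEET_PER_MILE * METERS_PER_FOOT)

print(f"At one foot: {near:.1f} W per square meter")
print(f"At one mile: {far:.7f} W per square meter")
print(f"Reduction:   about {near / far:,.0f} times weaker")
```

A mile is 5280 feet, so the energy hitting you is weaker by a factor of 5280 squared, which is nearly 28 million.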

Cell towers have much lower powered transmitters than radio or TV stations because of their design - a cell only communicates with clients in a small area then hands off the client to a different cell as it moves. Modern mesh Wifi works much the same way.